FAQ

Answers to the questions that come up most often when teams deploy, secure, and operate Simple Chat in Azure environments.

Use this page as the fast triage layer: it focuses on the recurring questions that show up after a team has already deployed or started hardening the environment.

Start with the category that matches the failure mode

Each category below points to the section that groups the most likely causes and checks.

Firewall and private endpoint issues

Start here when uploads, search, or admin actions break only after the app is placed behind a firewall, WAF, or private network boundary.

Authentication and access

Use this section when users cannot sign in, role assignments do not behave as expected, or model enumeration fails because of tenant boundaries.

Uploads and retrieval

Use this path when files fail during processing, indexes stay empty, or grounded answers are missing expected source material.

Model and endpoint configuration

Use this section when GPT, embeddings, DALL-E, or APIM-mode settings are the part of the system that is behaving unexpectedly.

Firewall and private endpoint questions

These issues usually surface after the app is moved behind network controls that only allow a subset of browser or outbound service traffic.

Q1. We put Simple Chat behind a firewall or WAF and uploads, search, or admin updates stopped working.

The browser still needs to reach the backend API routes that power the app.

What to verify

- Simple Chat serves a frontend in the browser and a backend API from the app service, so blocking `/api/*` traffic breaks core features even when the page shell still loads.

- Allow browser-originated `GET`, `POST`, `PUT`, `PATCH`, and `DELETE` calls to your app service API routes.

- Use the repository OpenAPI specification under `artifacts/open_api/openapi.yaml` to understand which endpoints the frontend depends on.
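To turn the OpenAPI specification into a firewall or WAF allow list, it can help to flatten it into method and path pairs. The following is a minimal sketch, assuming the file follows the standard OpenAPI `paths` layout and has already been parsed into a dictionary (for example with a YAML parser). The function name and the sample paths are illustrative, not taken from the repository.

```python
# HTTP methods the browser is expected to send to the API routes.
HTTP_METHODS = ("get", "post", "put", "patch", "delete")


def list_api_operations(spec: dict) -> list[tuple[str, str]]:
    """Return (METHOD, path) pairs from an OpenAPI `paths` object, sorted by path."""
    operations = []
    for path, item in spec.get("paths", {}).items():
        for method in HTTP_METHODS:
            if method in item:
                operations.append((method.upper(), path))
    return sorted(operations, key=lambda op: (op[1], op[0]))


# Hypothetical stand-in for the parsed contents of
# artifacts/open_api/openapi.yaml; the real paths will differ.
sample_spec = {
    "paths": {
        "/api/documents": {"get": {}, "post": {}},
        "/api/documents/{document_id}": {"delete": {}},
    }
}

for method, path in list_api_operations(sample_spec):
    print(method, path)
```

Comparing this list against the firewall or WAF rules shows which methods or routes are being dropped.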

Q2. Can I use Azure OpenAI and related services through private endpoints?

Yes, but the app service must be able to resolve and reach those private addresses.

What to verify

- Integrate the app service with a VNet that can reach the private endpoints for Azure OpenAI, AI Search, Cosmos DB, Storage, and any other required service.

- Make sure private DNS zones or custom DNS resolve those endpoints to the expected private IP addresses.

- Validate outbound connectivity from the app service, not just from an admin workstation.
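A quick way to confirm the DNS check is to resolve each service hostname from the app service itself (for example from its SSH or console session) and see whether the answers are private addresses. This is a minimal sketch using only the Python standard library. The helper names are illustrative, and any hostname you pass in is your own.

```python
import ipaddress
import socket


def is_private_ip(address: str) -> bool:
    """True when the address falls in a private (non-public) range."""
    return ipaddress.ip_address(address).is_private


def resolve_addresses(hostname: str) -> list[str]:
    """Resolve a hostname to its unique IP addresses using the local resolver."""
    infos = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def check_private_resolution(hostname: str) -> dict[str, bool]:
    """Map each resolved address to whether it is private."""
    return {addr: is_private_ip(addr) for addr in resolve_addresses(hostname)}
```

Calling `check_private_resolution` with an Azure OpenAI or AI Search hostname should return only `True` values when private DNS is working. A public address in the result suggests the private DNS zone is not linked to the VNet, or custom DNS is not forwarding correctly.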

Authentication and access questions

When sign-in or model management fails, start by confirming the identity plane before you assume the app itself is broken.

Q3. Users are getting authentication errors or cannot log in.

Most login failures come from app registration or assignment drift.

What to verify

- The redirect URI ending in `/.auth/login/aad/callback` is registered in the Entra app registration and matches the app's hostname exactly.

- App Service Authentication is enabled, points at the correct app registration, and is set to require authentication.

- Users or groups are assigned to the enterprise application when assignment is required.

- Microsoft Graph permissions such as `User.Read`, `openid`, and `profile` are configured and have admin consent.

- `TENANT_ID` and `CLIENT_ID` values in the app settings match the intended tenant and application.
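Two of these checks are easy to script before digging into the portal. The sketch below builds the redirect URI to compare against the app registration, and flags `TENANT_ID` or `CLIENT_ID` values that are missing or malformed. It assumes both settings hold GUIDs, and the helper names and sample hostname are illustrative.

```python
import uuid


def is_guid(value) -> bool:
    """True when the value parses as a GUID (uuid.UUID is lenient about braces and hyphens)."""
    try:
        uuid.UUID(value)
        return True
    except (ValueError, AttributeError, TypeError):
        return False


def expected_redirect_uri(app_hostname: str) -> str:
    """Build the callback URI that should appear in the Entra app registration."""
    return f"https://{app_hostname}/.auth/login/aad/callback"


def check_identity_settings(settings: dict) -> list[str]:
    """Return a list of problems found in the identity-related app settings."""
    problems = []
    for name in ("TENANT_ID", "CLIENT_ID"):
        if not is_guid(settings.get(name, "")):
            problems.append(f"{name} is missing or is not a GUID")
    return problems


# Hypothetical hostname, shown only to illustrate the URI shape.
print(expected_redirect_uri("my-simplechat.azurewebsites.net"))
```

A clean result only confirms the values are well formed. Whether they point at the intended tenant and application still has to be checked against the app registration.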

Q4. Fetch Models fails when the authentication app registration is in a different tenant than Azure OpenAI.

The data plane can still work even when cross-tenant management-plane model listing is blocked.

Workaround

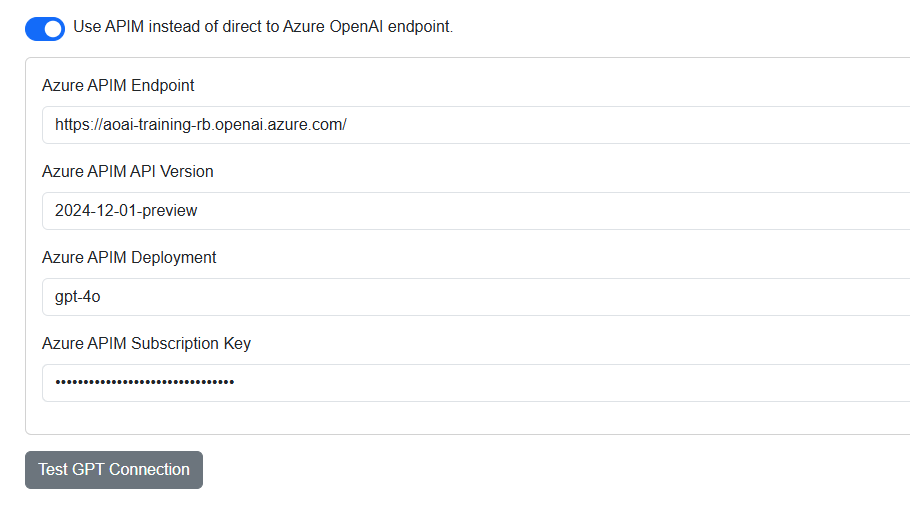

- In Admin Settings under GPT, enable Use APIM instead of direct to Azure OpenAI endpoint.

- In the APIM fields, enter the Azure OpenAI endpoint URL, API version, and deployment name directly. A real APIM proxy is not required for this workaround.

- Save the settings and fetch models again.

That route shifts model listing into the APIM-mode flow and avoids the cross-tenant management-plane enumeration failure.

Upload and search questions

These problems usually come from permission gaps, failed indexing, or missing model configuration for embeddings and extraction.

Q5. File uploads are failing.

Start by checking the dependent services the pipeline writes to during ingestion.

What to verify

- The app service has permission to reach AI Search, Document Intelligence, Storage, Speech, Video Indexer, and any other enabled service in the upload path.

- Managed identity role assignments or configured keys are valid and match the services you enabled.

- Application Insights and App Service logs show whether the failure happens during extraction, embedding, indexing, or storage.

- File Processing Logs in Admin Settings can expose the exact stage that failed.

Q6. RAG is not returning expected results or any results.

When search quality drops, verify indexing and embeddings before tuning prompts.

What to verify

- Uploaded documents finished processing successfully in the workspace UI.

- The relevant Azure AI Search index contains the documents you expect and its count increases after uploads.

- The embedding deployment is configured correctly and reachable from the application.

- The Search Documents toggle is enabled in the chat UI and the user question is phrased in a way that maps to indexed content.
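The index count check can be done outside the portal by calling the Azure AI Search REST API's document count operation before and after an upload. This is a minimal sketch using the standard library. It assumes the public-cloud `search.windows.net` domain, key-based access, and API version `2023-11-01`. The service and index names are placeholders for your own values.

```python
import urllib.parse
import urllib.request

API_VERSION = "2023-11-01"  # assumed; use the version your service supports


def count_url(service_name: str, index_name: str, api_version: str = API_VERSION) -> str:
    """Build the document count URL for an index (public-cloud domain assumed)."""
    index = urllib.parse.quote(index_name, safe="")
    return (
        f"https://{service_name}.search.windows.net"
        f"/indexes/{index}/docs/$count?api-version={api_version}"
    )


def fetch_document_count(service_name: str, index_name: str, api_key: str) -> int:
    """Call the count endpoint and return the number of documents in the index."""
    request = urllib.request.Request(
        count_url(service_name, index_name),
        headers={"api-key": api_key},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        # The service returns the count as plain text, possibly with a BOM.
        return int(response.read().decode("utf-8-sig").strip())
```

If the count does not change after an upload that the workspace UI reports as complete, the failure is more likely in the indexing step than in retrieval or prompting.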

Model management questions

These checks matter when the application loads but the configured AI capabilities do not behave the way you expect.

Q7. How do I update the GPT, embedding, or DALL-E models used by the application?

Model selection is handled through admin configuration rather than code deployment.

What to do

- Open Admin Settings.

- Go to the GPT, Embeddings, or Image Generation section that matches the model you want to change.

- Fetch the available deployments from the configured Azure OpenAI endpoint and select the desired deployment name.

- Save the settings. A code redeploy is not required when the endpoint stays the same.